DataOps: Enabling Business Agility

The DataOps development methodology allows companies to build, deploy, and optimize data-powered applications more quickly and more easily.

The agile approach involves identifying a problem to solve and breaking it down into smaller pieces. Each piece is assigned to a team of developers and scheduled into a defined timeframe, called a sprint, that should include planning, development, testing, and implementation.

Companies using DataOps are not only doing well but are also outpacing their competition. DataOps aims to optimize how an organization manages its data so that it can make better decisions.

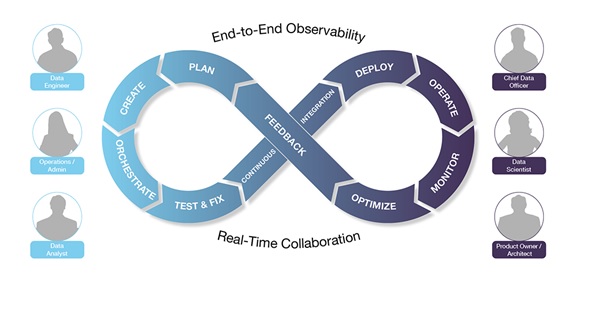

The practice of DataOps can lead to increased collaboration between data scientists, data engineers, data analysts, operations, and product owners across organizations. For DataOps to be successful, each of these roles must be aligned.

Forrester research indicates that companies that integrate analytics and data science into their operating models to bring actionable knowledge into every decision are twice as likely to be in market-leading positions as those that do not.

THE DATAOPS LIFECYCLE IN 10 STEPS

There is more to DataOps than making existing data pipelines run efficiently or getting reports and AI/ML inputs and outputs to appear when needed. DataOps covers all aspects of data management.

From raw data to insights, DataOps is a journey for data teams. As much as possible, DataOps stages are automated to shorten the time to value. The steps below show the full lifecycle of a data-driven application.

Plan: Define how data analytics can be used to resolve a business challenge. Identify the data sources, processing steps, and analytics steps necessary to solve the problem. Decide on the right technology and delivery platform, and then specify the budget and performance requirements.

Create: Design and implement the data pipelines and application code necessary for ingesting, transforming, and analyzing the data. SQL, Scala, Python, R, and Java are among the programming languages used to develop data applications, depending on the desired outcome.

Orchestrate: Build a workflow that connects the stages needed to produce the desired result. Schedule code execution based on when the results are needed, when the most cost-effective processing is available, and when related jobs (inputs and outputs, or steps in a pipeline) are scheduled to run.

Test & Fix: Simulate running the code against data sources in a sandbox environment. Find and eliminate data pipeline bottlenecks. Check for accuracy, quality, efficiency, and performance before submitting results.

Continuous Integration: Revised code must meet established criteria before it is promoted into production. Accelerate improvements and reduce risk by incrementally integrating the latest code and data sources.

Deploy: Determine the best scheduling window for each job based on SLAs and budget. Verify whether the changes have improved the process; if not, roll them back and revise.

Operate: The code runs against data to resolve the business problem, and stakeholder feedback is solicited. Detect deviations from SLAs and fix them to ensure compliance.

Monitor: Observe the process end to end, including data pipelines and code execution. Data operators and engineers use tools to watch how code runs against data in a busy environment and to troubleshoot any issues that occur.

Optimize: Ensure that data applications and pipelines deliver high-quality, cost-effective, and business-focused results. Team members tune each application's resource usage to improve its performance and effectiveness.

Feedback: Data team members gather feedback from all stakeholders, including app users and line-of-business management. During this phase, results are evaluated against success criteria, and the findings are fed back into the planning phase.
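The Orchestrate step above can be sketched as dependency-ordered execution. The example below is only an illustration, not a prescribed DataOps implementation: the job names and the `run_order` helper are hypothetical, and it uses Python's standard `graphlib` module (Python 3.9+) to group jobs into batches whose inputs are already complete.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each job maps to the set of jobs it depends on.
pipeline = {
    "ingest": set(),
    "transform": {"ingest"},
    "train_model": {"transform"},
    "build_report": {"transform"},
    "publish": {"train_model", "build_report"},
}


def run_order(jobs):
    """Return batches of jobs; jobs within one batch have no mutual dependencies."""
    sorter = TopologicalSorter(jobs)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies form a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # jobs whose inputs are all done
        batches.append(ready)
        sorter.done(*ready)  # mark the batch finished to unlock downstream jobs
    return batches


batches = run_order(pipeline)
for number, batch in enumerate(batches, start=1):
    print(f"batch {number}: {', '.join(batch)}")
```

A real orchestrator would also weigh deadlines and processing cost, as the step describes; this sketch covers only the ordering of related jobs.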

DataOps has two characteristics that apply to every stage of the lifecycle: end-to-end observability and real-time collaboration.

END-TO-END OBSERVABILITY

Observability from beginning to end is essential for delivering high-quality data products on time and on budget. Data-driven applications must expose their KPIs, including measurements of the data sets they process and the resources they consume. Metrics include application/pipeline latency, SLA score, error rate, result correctness, cost of a run, resource usage, data quality, and data usage.
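As a rough illustration of how a few of these metrics might be derived from run records, consider the sketch below. The `Run` record, the `summarize` helper, and the sample values are all hypothetical, and "SLA score" is assumed here to mean the fraction of runs that succeed within a latency target; the article does not define it.

```python
from dataclasses import dataclass


@dataclass
class Run:
    """One pipeline execution (hypothetical record shape)."""
    latency_s: float
    succeeded: bool
    cost_usd: float


def summarize(runs, sla_latency_s):
    """Aggregate per-run records into a few pipeline-level metrics."""
    total = len(runs)
    if total == 0:
        raise ValueError("no runs to summarize")
    failures = sum(1 for r in runs if not r.succeeded)
    # Assumption: a run meets the SLA if it succeeds within the latency target.
    within_sla = sum(
        1 for r in runs if r.succeeded and r.latency_s <= sla_latency_s
    )
    return {
        "avg_latency_s": sum(r.latency_s for r in runs) / total,
        "error_rate": failures / total,
        "sla_score": within_sla / total,
        "total_cost_usd": sum(r.cost_usd for r in runs),
    }


# Made-up sample data for illustration only.
runs = [
    Run(latency_s=120.0, succeeded=True, cost_usd=1.5),
    Run(latency_s=300.0, succeeded=True, cost_usd=2.0),
    Run(latency_s=90.0, succeeded=False, cost_usd=0.5),
    Run(latency_s=150.0, succeeded=True, cost_usd=1.0),
]
summary = summarize(runs, sla_latency_s=200.0)
print(summary)
```

Metrics such as result correctness and data quality need domain-specific checks and are left out of this sketch.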

This visibility is needed horizontally - across every step and service in the pipeline - and vertically to understand whether the problem is with the application code, the service, the container, the data, or the infrastructure. Observability across the entire data lifecycle provides teams with a reliable and precise means of collaborating around data.

REAL-TIME COLLABORATION

Working in short sprints, for example, allows teams to settle into a rhythm based on real-time collaboration. The DataOps lifecycle helps teams identify the stage in which they are working and reach out to other stages to solve problems, both immediately and in the near term.

Collaboration in real time requires open discussion of results as they occur. Every discussion in the observability platform is grounded in shared facts derived from a single source of truth. Real-time collaboration is the only way for a relatively small group to deliver high-quality products regularly and over time.

CONCLUSION

By applying a DataOps approach to their work and paying attention to each step in the DataOps lifecycle, data teams can increase their productivity and the quality of the results they provide to the organization.

Beyond improving the ability to deliver predictable and reliable business value from data assets, DataOps lets the business as a whole make more and better use of data in decision-making, product development, and service delivery.

In many cases, advanced technologies, such as artificial intelligence and machine learning, can make organizations more competitive and lead to significant revenue increases.